
- https://dltj.org/article/google-scholar-and-books/


- 2007-08-15T20:34:29+00:00

- 2018-01-15T20:28:01+00:00

Analysis of Google Scholar and Google Books

Two papers were published recently exploring the quality of Google Scholar and Google Books.

Google Scholar

Philipp Mayr and Anne-Kathrin Walter, both of GESIS / Social Science Information Center in Bonn, Germany, uploaded an article to arXiv called "An exploratory study of Google Scholar." ((Judging from the citation listed on Philipp Mayr's homepage, the article will appear in an upcoming issue of Online Information Review from Emerald Group Publishing.)) Originally created as a presentation for a 2005 conference, it was updated in January 2007 to reflect new findings and published as a paper. Excerpts from the abstract include:

The study shows deficiencies in the coverage and up-to-dateness of the [Google Scholar] index. Furthermore, the study points up which web servers are the most important data providers for this search service and which information sources are highly represented. We can show that there is a relatively large gap in Google Scholar’s coverage of German literature as well as weaknesses in the accessibility of Open Access content. Major commercial academic publishers are currently the main data providers.

We conclude that Google Scholar has some interesting pros (such as citation analysis and free materials) but the service can not be seen as a substitute for the use of special abstracting and indexing databases and library catalogues due to various weaknesses (such as transparency, coverage and up-to-dateness).

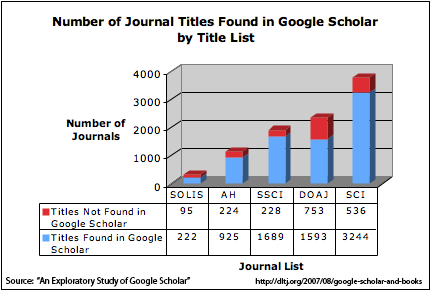

The authors performed a "brute force analysis" (their words) of the coverage of Google Scholar by comparing search results by journal title with five journal lists: ISI Arts & Humanities Citation Index, ISI Social Science Citation Index, ISI Science Citation Index, open access journals listed by DOAJ, and journals from the SOLIS database (mainly German-language journals from sociological disciplines). They queried Google Scholar using the "Return articles published in..." limiter on the advanced search screen, downloaded the first 100 records for each title, then parsed and analyzed each of the records. In total, 621,000 records from Google Scholar search results were analyzed.

The authors first determined how many titles from each of the five journal lists appear in the Google Scholar database. They note surprise at the relatively low coverage of open access titles listed in the DOAJ. I think this can be explained by the fact that many open access publishers do not use a systematic application to put their content on the internet. Of the 2,804 journals in the DOAJ directory, only 846 (about 30%) are searchable via DOAJ's own article-level indexing service. ((Numbers from the DOAJ home page, as of 15-Aug-2007.)) If the journals can't be easily harvested at the article level, then Google can't add them to the Scholar article index.
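To make the idea of title coverage concrete, here is a minimal Python sketch. It is my own illustration, not the authors' code: the function name `coverage_by_list`, the shape of the `results` mapping, and the rule that a title counts as covered when at least one record comes back are all assumptions. Only the cap of 100 records per title comes from the paper's description.

```python
from typing import Dict, List

# The study kept only the first 100 records per journal title.
MAX_RECORDS_PER_TITLE = 100


def coverage_by_list(
    journal_lists: Dict[str, List[str]],
    results: Dict[str, List[dict]],
) -> Dict[str, float]:
    """Return, per journal list, the share of titles with at least one record.

    journal_lists maps a list name (e.g. "DOAJ") to its journal titles.
    results maps a journal title to the parsed records found for it.
    Both structures are hypothetical, chosen only for illustration.
    """
    coverage = {}
    for list_name, titles in journal_lists.items():
        if not titles:
            coverage[list_name] = 0.0
            continue
        # Assumption: a title is "covered" if any record was returned for it.
        found = sum(
            1 for title in titles
            if results.get(title, [])[:MAX_RECORDS_PER_TITLE]
        )
        coverage[list_name] = found / len(titles)
    return coverage
```

The same kind of ratio applied to the DOAJ numbers above, `846 / 2804`, comes out at roughly 0.30.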

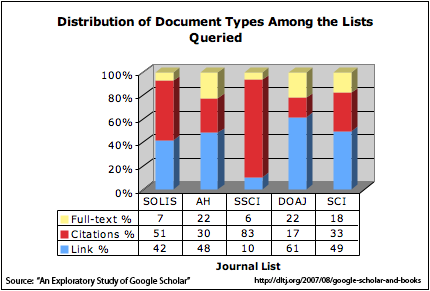

Based on the semantics provided in each record, the authors divided the results into three categories (referred to in the paper as "document types"): links to complete descriptive records on an external (publisher's or aggregator's) site, citation-only records (no full-text and no link to more complete information at an external site), and direct access links to full text. The distribution of results is shown in the table to the right.
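The three-way split can be sketched in a few lines of Python. This is only an illustration of the categories as described here: the `Record` fields, the category labels, and the rule that a full-text link takes precedence over an external link are my assumptions, not details taken from the paper.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


@dataclass
class Record:
    """One parsed search result (hypothetical structure, not from the paper)."""
    title: str
    external_url: Optional[str] = None  # descriptive record at a publisher or aggregator
    fulltext_url: Optional[str] = None  # direct link to the full text


def document_type(record: Record) -> str:
    """Assign one of the three categories to a record."""
    if record.fulltext_url:
        # Assumption: full text wins when both kinds of link are present.
        return "full-text"
    if record.external_url:
        return "link"
    # Neither full text nor a link to a fuller record elsewhere.
    return "citation"


def distribution(records: Iterable[Record]) -> Dict[str, float]:
    """Return the share of records falling into each category."""
    counts = Counter(document_type(r) for r in records)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {kind: count / total for kind, count in counts.items()}
```

Calling `distribution()` on the parsed records for one journal list would give the kind of per-category shares that the table reports, under the assumptions noted above.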

The paper also includes an analysis of the various publisher and portal sites that supply information to Google Scholar's index.

Google Books

The August issue of First Monday contains an article by Paul Duguid called "Inheritance and loss? A brief survey of Google Books". The article is a somewhat contrived exploration of the Google Books Library Project through his lens of quality assurance derived "through innovation or through 'inheritance.'" His thesis seems to be that users expect the reputations of the libraries participating in the project to convey a level of quality to the results of the digitization process. (Harvard, University of Michigan, New York Public, Stanford, and Oxford, among the other partners, are arguably a reputable group.) Duguid then goes on to pick what arguably has to be the hardest book artifact to capture digitally (various editions of Laurence Sterne's "The Life and Opinions of Tristram Shandy, Gentleman") as an example of everything that is wrong with Google Books.

I don't subscribe to that notion at all, but it is perhaps because I've been around enough technology and innovation to know that each new service needs to stand on its own. Tristram Shandy is in part an experiment in typography and layout by the author, as Duguid describes in detail in his article, and it is atypical in the extreme, so I think many of the characterizations of the Google Books project based on this one artifact are unfair and short-sighted. When you strip away the false dichotomy of innovative-or-inherited quality, the oddities surrounding the Tristram Shandy artifact, and various unnecessary pot-shots, (("A quick look at the online catalogue for Stanford’s library shows that the Stanford volume presented as your second choice by Google Books is actually tucked away in the Stanford Auxiliary library along with “infrequently–used” texts.")) Duguid's analysis does point to some apparent problems with Google's scheme for digitizing and indexing books. The quality of some of the scans he points out, of Tristram Shandy and of other books, is a source of concern. Substandard metadata is another:

Not a word is mentioned about multiple volumes or volume number. Indeed, a quick survey of the Google Book Project suggests that Google doesn’t recognize volume numbers. Not only are the different editions (Harvard’s from 1896, Stanford’s from 1904) given exactly the same name, but also the different volumes of this Stanford’s multivolume edition are labeled identically. Consequently, whatever algorithm Google uses to find the book, it is quite likely, as in this case, to offer volume II first.

Reservations aside, it is a good review of some of the problematic outcomes of the Google Books Library Project.

The text was modified to remove a link to http://www.gesis.org/IZ/Mayr/ on November 6th, 2012.